AI safety and progress don’t have to be enemies

Some exciting personal news + my AI safety origin story

When I first joined OpenAI, our content filter had some serious problems. For example, it would flag phrases like “Chinese chicken salad” as sensitive text, just for using the word Chinese.1

Our customers were understandably upset at how unreliable the filter was: If our models and our safety tooling weren’t reliable enough, they couldn’t trust our AI for all sorts of useful tasks.

My experience of fixing the content filter was a taste of how safety and innovation don’t have to be enemies: Sometimes, to unlock the uses of AI that people want, we need to improve AI’s safety properties first.2

That’s one theme I hope to push on in my writing: that safety and innovation really don’t have to be enemies. In fact, if society fails at getting AI companies to invest appropriately in AI safety, we might squander all the incredible progress that’s possible from AI.

Safety and technological progress can very much complement each other. Which dovetails nicely with some news to share …

Some exciting personal news

I’m excited to share that I’ve been selected as a Roots of Progress fellow, writing about artificial intelligence’s risks, its upsides, and how we need to manage those risks to unlock the progress from the upsides.3 The content filter story is a good illustration of what I hope to convey in my fellowship—all the ways that AI safety and AI progress don’t have to be in tension.

If you’re a longtime reader, not too much will change: Every two weeks or so, I’ll bring you my independent writing and research about the future of AI and how to make these systems go better. Think of this like an AI insider’s take, minus the bias of working at an AI company—and still with complete freedom to say whatever I believe.4

The Substack continues to be totally free; my only ask is that if you find my writing helpful, you share it with another person or two. Consider also encouraging them to subscribe.

My core beliefs about AI and progress

Because I’m going to be writing more squarely about AI and progress, it might be helpful to know my core beliefs, which I expect to come back to frequently. In particular:

The AI future could be extremely great. Think personalized medicine, an abundance of resources that allows us to eradicate poverty, you name it. Though I think the major AI companies are often too handwave-y when talking about how to deliver this future, I do believe that it can be real, if we’re conscientious about how we pursue it.5

But there are major challenges blocking the way to an excellent AI future. In particular, AI companies are racing to build technology that they absolutely do not understand and cannot control. There are some nascent ideas for how we might try to control these systems,6 but the follow-through on even basic safety practices is far from uniform, and the science just isn’t there yet.

Our options for making AI go better are more numerous and more varied than you might expect. Safety and innovation absolutely do not have to be enemies, and we can significantly limit AI safety risks without hindering innovation, if we’re willing to try hard and get creative.

In the coming weeks, I plan to return to these topics and give them the fuller treatment they deserve. These ideas will show up in a few different types of writing—explainers, original research, narrative descriptions of what it’s like to work in safety at a leading AI company, and so on.

What’s my deal anyway?

I’ve also realized that I never properly introduced myself on Substack, which might be helpful context as I begin this fellowship.

For those reading the blog for the first time, hello!

I’m Steven Adler, a computer scientist with a background in economics, but more generally just interested in what makes the world tick.

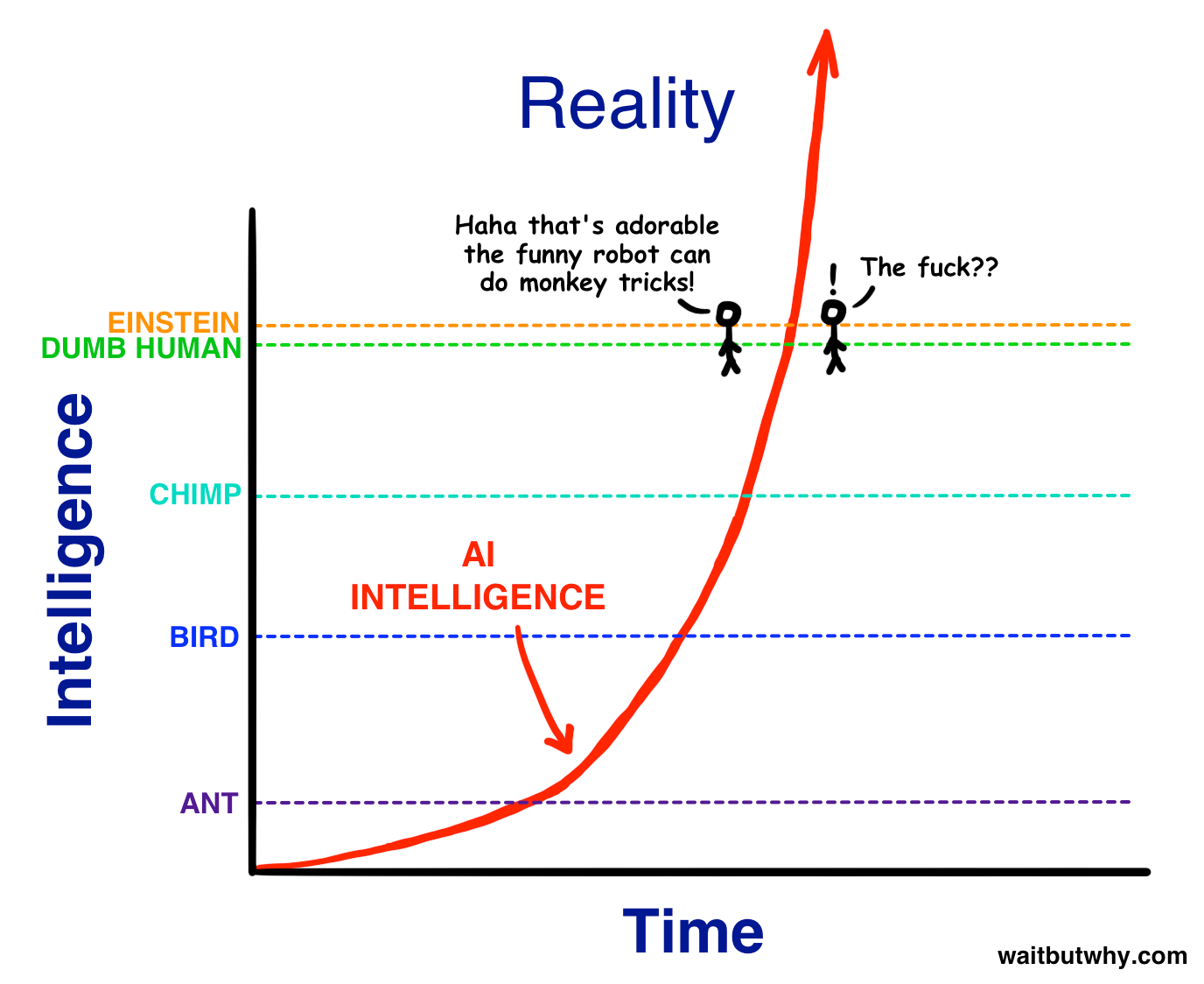

I got interested in AI risk topics (and just AI broadly) a little over a decade ago, when the combination of the book Superintelligence and the movie Ex Machina hit me with a pretty strong one-two punch. Namely, the world might get pretty crazy if AI companies succeed at building systems much smarter than even the smartest humans (essentially, the companies’ stated goals).7

Through some hustle and good luck, I moved from strategy consulting, to AI standards and governance, to ultimately leading various safety-related research and products at OpenAI, where I worked for four years from late 2020 through 2024.

Importantly, I am not the Steven Adler who is the drummer in Guns N’ Roses, but boy would that be an interesting dual career.

What informs my writing?

I won’t bore you with too many details, but one great thing about joining OpenAI when I did is that I worked on essentially every risk-related topic you can imagine.8 Some of the topics that started out as “horizon-scanning” for future risks have unfortunately since become very “here and now.”

AI development is advancing remarkably quickly: Once, the team I led—OpenAI’s “dangerous capability evaluations”—built the industry’s first bioweapons-related AI evaluations;9 now I wonder how long until AI enables all sorts of new bioterrorism. Many AI researchers, like myself, are pretty terrified of where the field is heading, but feel powerless to individually stop it. (That’s where writing comes in: collective action, baby!)

One thing to know about my writing is that I hate hype and hyperbole, both negative and positive. I try to speak precisely and say exactly what I mean, which sometimes ends up with long hedge-y sentences (working on it!). I also think it’s virtuous when people change their minds, especially if they’re breaking from the positions of their “tribe”: If my beliefs change during the process of my writing, you will hear this loud and clear.

What does that mean for you? My commitment is to give you the honest, clear-eyed take on what’s happening in AI safety, without giving up on technological progress. Thanks for being along on this ride with me, now provisionally named Clear-Eyed AI.

Most of all, I want my writing to focus on the topics that matter and aren’t well-explained elsewhere. If you have ideas about AI topics you’d like to see me cover, please do drop me a comment below or get in touch.

Acknowledgements: Thank you to Dan Alessandro, Michael Adler, and Sam Chase for helpful comments and discussion. The views expressed here are my own and do not imply endorsement by any other party. All of my writing and analysis is based solely on publicly available information.

1. The people who’d trained the initial filter had of course done a good job for where the AI field was at the time, and with the limited resources given to them.

2. To improve the content filter, I took an afternoon to comb through a few thousand datapoints and realized that the filter needed to be recalibrated. Basically, it was too sensitive, which was causing false positives and crowding out the signs of actually harmful cases. No content filter is ever truly perfect or “fixed.” But tooling can still be significantly improved in terms of accuracy, latency, and other tradeoffs.

Of course, focusing just on what’s useful for customers won’t go far enough to solve the most important AI safety challenges, but it’s at least a start.

3. I’m honored to be among so many sharp writers; you can view the rest of the cohort here: https://rootsofprogress.org/fellows/

4. I’m excited to be joining a community of other writers who’ll push each other’s thinking, our clarity, and our presentation, but my full independence remains.

5. For one taste of what this future could be like, see Dario Amodei’s “Machines of Loving Grace,” though I take issue with its section on “Peace and governance.” See also Sam Altman’s “Moore’s Law for Everything.”

6. I particularly recommend Redwood Research’s blog, for both empirical and conceptual research on how we might control powerful AI systems.

7. Really, this was a one-two-three punch if you count Tim Urban’s series on powerful AI, which is still worth a read.

8. OpenAI changed extremely quickly in those days, even by the standards of other small companies. In my second week, a not-small portion of our senior safety staff walked out the door to form Anthropic. During my four years, I had roughly 10 different managers and 20 different managees. “Rolling with the changes” was definitely a dispositional requirement.

9. Needless to say, this work was not a one-man show! My team’s work on evaluations for AI capabilities related to bio-, chemical, and nuclear weapons was done jointly with the consulting firm Gryphon Scientific, as well as with researchers from Carnegie Mellon and MIT.
